Chapter 10,11 Indexes - PowerPoint PPT Presentation

Title:

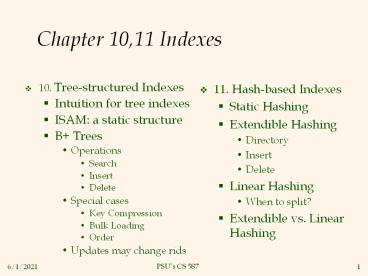

Chapter 10,11 Indexes

Description:

Perform inserts and deletes on Btrees, extndible and linear hash indexes ... Insert: Find leaf data entry belongs to, and put it there. Perhaps in an overflow page ... – PowerPoint PPT presentation

Number of Views:43

Avg rating:3.0/5.0

Title: Chapter 10,11 Indexes

1

Chapter 10,11 Indexes

- 10. Tree-structured Indexes

- Intuition for tree indexes

- ISAM: a static structure

- B+ Trees

- Operations

- Search

- Insert

- Delete

- Special cases

- Key Compression

- Bulk Loading

- Order

- Updates may change rids

- 11. Hash-based Indexes

- Static Hashing

- Extendible Hashing

- Directory

- Insert

- Delete

- Linear Hashing

- When to split?

- Extendible vs. Linear Hashing

2

Learning Objectives

- Describe the pros and cons of ISAM

- Perform inserts and deletes on B+ trees, extendible and linear hash indexes

- For B+ trees: describe algorithms for bulk loading and key compression

- Explain why hash indexes are rarely used

3

Indexes in Real DBMSs

- SQL Server, Oracle, DB2: Btree only

- Postgres: Btree, Hash (discouraged)

- GiST

- http://gist.cs.berkeley.edu

- Generalized index type

- Models RTrees and other indexes

- GIN

- http://www.sai.msu.su/megera/oddmuse/index.cgi/Gin

- Primarily text search

- MySQL: depends on storage engine. Mainly Btree, some hash, Rtree

- Bottom line: hash indexes are rare

- RTrees index rectangles and higher-dimensional structures

4

10.1 Intuition

10. Trees

- Find all students with gpa > 3.0

- If data is in a sorted file, do binary search to find the first such student, then scan to find others.

- Cost of binary search on disk can be high.

- 2 simple ideas:

- Create an index file.

- Use a large fanout F, since each dereference is expensive (a disk I/O).

[Figure: an index file with entries k1, k2, ..., kN, each pointing to one of pages 1, 2, 3, ..., N of the data file]

- Can do binary search on (smaller) index file!

5

ISAM

10. Trees

[Figure: layout of an index page: P0, K1, P1, K2, P2, ..., Km, Pm, where each (K, P) pair is an index entry]

- Index file may still be quite large. But we can apply the idea repeatedly!

[Figure: a tree of non-leaf pages above a row of leaf pages (the primary pages)]

- Leaf pages contain data entries.

6

Review of Indexes

10. Trees

- As for any index, 3 alternatives for data entries k*:

- Data record with key value k

- <k, rid of data record with search key value k>

- <k, list of rids of data records with search key k>

- Choice is orthogonal to the indexing technique used to locate data entries k*.

- Tree-structured indexing techniques support both range searches and equality searches.

- ISAM: static structure. B+ tree: dynamic, adjusts gracefully under inserts and deletes.

7

Comments on ISAM

- File creation: leaf (data) pages allocated sequentially, sorted by search key; then index pages allocated, then space for overflow pages.

- Index entries: <search key value, page id>. They direct the search for data entries, which are in leaf pages.

- Search: start at root; use key comparisons to go to leaf. Cost is proportional to log_F N, where F = # entries/index page and N = # leaf pages.

- Insert: find the leaf the data entry belongs to, and put it there.

- Perhaps in an overflow page.

- Delete: find and remove from leaf; if an overflow page becomes empty, de-allocate it.

- Thus data pages remain sequential/contiguous.

- Static tree structure: inserts/deletes affect only leaf pages.

[Figure: file layout: data pages first, then index pages, then overflow pages]

8

Example ISAM Tree

10. Trees

- Each node can hold 2 entries; no need for next-leaf-page pointers. (Why?)

9

After Inserting 23, 48, 41, 42 ...

10.Trees

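[Figure: the ISAM tree after the inserts]

Root: 40

Index pages: [20 33] [51 63]

Primary leaf pages: [10 15] [20 27] [33 37] [40 46] [51 55] [63 97]

Overflow pages: [23] chained to leaf [20 27]; [48 41] chained to leaf [40 46]; [42] chained to [48 41]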

10

... Then Deleting 42, 51, 97

10. Trees

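[Figure: the ISAM tree after the deletes]

Root: 40

Index pages: [20 33] [51 63]

Primary leaf pages: [10 15] [20 27] [33 37] [40 46] [55] [63]

Overflow pages: [23] chained to leaf [20 27]; [48 41] chained to leaf [40 46]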

- Note that 51 appears in index levels, but not in leaf!
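The insert and delete sequence of this example can be replayed with a minimal sketch. It assumes a flattened index (the separator keys 20, 33, 40, 51, 63 in one sorted list rather than two index levels) and models each overflow chain as one unsorted list; the class name `IsamFile` and its methods are hypothetical, chosen only for illustration.

```python
import bisect

LEAF_CAPACITY = 2  # each primary leaf page holds 2 entries, as in the example


class IsamFile:
    """ISAM sketch: static separator keys, primary leaves, overflow chains."""

    def __init__(self, separators, leaves):
        self.separators = separators              # fixed at file creation
        self.primary = [list(page) for page in leaves]
        self.overflow = [[] for _ in leaves]      # one overflow chain per leaf

    def _leaf_index(self, key):
        # The number of separators <= key is the position of the leaf.
        return bisect.bisect_right(self.separators, key)

    def insert(self, key):
        i = self._leaf_index(key)
        if len(self.primary[i]) < LEAF_CAPACITY:
            self.primary[i].append(key)
        else:
            self.overflow[i].append(key)          # overflow is not kept sorted

    def delete(self, key):
        i = self._leaf_index(key)
        for page in (self.primary[i], self.overflow[i]):
            if key in page:
                page.remove(key)                  # the index is never touched
                return True
        return False

    def search(self, key):
        i = self._leaf_index(key)
        return key in self.primary[i] or key in self.overflow[i]


isam = IsamFile([20, 33, 40, 51, 63],
                [[10, 15], [20, 27], [33, 37], [40, 46], [51, 55], [63, 97]])
for k in (23, 48, 41, 42):
    isam.insert(k)
overflow_after_inserts = [list(chain) for chain in isam.overflow]
for k in (42, 51, 97):
    isam.delete(k)
print(51 in isam.separators, isam.search(51))
```

After the deletes, 51 is still a separator in the index although no leaf contains it, which is exactly the situation the note above points out.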

11

Pros and Cons of ISAM

- Cons

- After many inserts and deletes, long overflow chains can develop

- Overflow records may not be sorted

- Pros

- Inserts and deletes are fast since there's no need to balance the tree

- No need to lock nodes of the original tree for concurrent access

- If the tree has had few updates, then interval queries are fast.

12

B+ Tree: Most Widely Used Index

10. Trees

- Insert/delete at log_F N cost; keep tree height-balanced. (F = fanout, N = # leaf pages)

- Minimum 50% occupancy (except for root). Each node contains d <= m <= 2d entries. The parameter d is called the order of the tree.

- This ensures that the height is relatively small.

- Supports equality and range searches efficiently.

13

Example B+ Tree

10. Trees

- Search begins at root, and key comparisons direct it to a leaf (as in ISAM).

- Search for 5, 15, all data entries > 24 ...

- Based on the search for 15, we know it is not in the tree!

14

Inserting a Data Entry into a B+ Tree

10. Trees

- Find correct leaf L.

- Put data entry onto L.

- If L has enough space, done!

- Else, must split L (into L and a new node L2)

- Redistribute entries evenly, copy up middle key.

- Insert index entry pointing to L2 into parent of L.

- This can happen recursively.

- To split an index node, redistribute entries evenly, but push up the middle key. (Contrast with leaf splits.)

- Splits grow the tree; a root split increases height.

- Tree growth: gets wider or one level taller at top.
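The difference between the two kinds of split can be sketched in a few lines. This is a simplified illustration that works on plain sorted lists rather than pages, assuming an over-full node holds 2d+1 entries; the function names `split_leaf` and `split_index` are hypothetical.

```python
def split_leaf(entries, d):
    """Split an over-full leaf of 2d+1 sorted entries.

    The middle key is COPIED up: it goes to the parent and also stays
    in the new right leaf, because leaves must hold every data entry.
    """
    left, right = entries[:d], entries[d:]
    return left, right, right[0]


def split_index(keys, d):
    """Split an over-full index node of 2d+1 sorted keys.

    The middle key is PUSHED up: it moves to the parent and appears in
    neither half, because index keys only direct the search.
    """
    left, right = keys[:d], keys[d + 1:]
    return left, right, keys[d]


leaf_result = split_leaf([2, 3, 5, 7, 8], d=2)
index_result = split_index([5, 13, 17, 24, 30], d=2)
print(leaf_result)   # ([2, 3], [5, 7, 8], 5)
print(index_result)  # ([5, 13], [24, 30], 17)
```

With d = 2, the leaf split keeps 5 in the right leaf while the index split removes 17 from both halves, and each resulting node still holds at least d entries.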

15

Inserting 8 into Example B+ Tree

10. Trees

- Observe how minimum occupancy is guaranteed in both leaf and index page splits.

- Note the difference between copy-up and push-up; be sure you understand the reasons for this.

[Figure: leaf split into [2 3] and [5 7 8]; 5 is the entry to be inserted in the parent node. (Note that 5 is copied up and continues to appear in the leaf.)]

[Figure: index page split; the pushed-up key appears only once in the index. Contrast with the leaf split.]

16

Example B+ Tree After Inserting 8

10. Trees

[Figure: the tree after inserting 8]

Root: 17

Index pages: [5 13] [24 30]

Leaf pages: [2 3] [5 7 8] [14 16] [19 20 22] [24 27 29] [33 34 38 39]

- Notice that root was split, leading to increase in height.

- In this example, we can avoid the split by re-distributing entries; however, this is usually not done in practice.

17

Deleting a Data Entry from a B+ Tree

10. Trees

- Start at root, find leaf L where entry belongs.

- Remove the entry.

- If L is at least half-full, done!

- If L has only d-1 entries,

- Try to re-distribute, borrowing from sibling (adjacent node with same parent as L).

- If re-distribution fails, merge L and sibling.

- If merge occurred, must delete entry (pointing to L or sibling) from parent of L.

- Merge could propagate to root, decreasing height.
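The leaf-level part of these steps can be sketched as follows. This is a simplified illustration under stated assumptions: leaves are plain sorted lists, the sibling is always the right neighbour under the same parent, and the function name `delete_from_leaf` and its return convention are hypothetical. Changes to the parent are only reported, not performed.

```python
def delete_from_leaf(leaf, sibling, key, d):
    """Delete key from leaf; sibling is the right neighbour with the same parent.

    Returns (action, new_separator). new_separator is the key the parent
    must use between leaf and sibling after a re-distribution.
    """
    leaf.remove(key)
    if len(leaf) >= d:
        return "done", None                  # still at least half full
    if len(sibling) > d:
        leaf.append(sibling.pop(0))          # borrow the smallest sibling entry
        return "redistributed", sibling[0]   # new middle key is copied up
    leaf.extend(sibling)                     # sibling cannot spare an entry
    sibling.clear()
    return "merged", None                    # parent must drop one index entry


leaf, sibling = [19, 20, 22], [24, 27, 29]
r1 = delete_from_leaf(leaf, sibling, 19, d=2)
r2 = delete_from_leaf(leaf, sibling, 20, d=2)
r3 = delete_from_leaf(leaf, sibling, 24, d=2)
print(r1, r2, r3, leaf)
```

With d = 2 and these two leaves, deleting 19 needs no further work, deleting 20 borrows 24 and reports 27 as the new separator, and deleting 24 forces a merge into a single leaf.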

18

Example Tree After (Inserting 8, Then) Deleting 19 and 20 ...

10. Trees

[Figure: the tree after deleting 19 and 20]

Root: 17

Index pages: [5 13] [27 30]

Leaf pages: [2 3] [5 7 8] [14 16] [22 24] [27 29] [33 34 38 39]

- Deleting 19 is easy.

- Deleting 20 is done with re-distribution. Notice how the middle key is copied up.

19

... And Then Deleting 24

10. Trees

- Must merge.

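- Observe toss of index entry (on right), and pull down of index entry (below).

[Figure, right: after the leaf merge, index page [30] over leaf pages [22 27 29] and [33 34 38 39]]

[Figure, below: the final tree]

Root: [5 13 17 30]

Leaf pages: [2 3] [5 7 8] [14 16] [22 27 29] [33 34 38 39]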

20

Example of Non-leaf Re-distribution

10. Trees

- Tree is shown below during deletion of 24. (What could be a possible initial tree?)

- In contrast to previous example, can re-distribute entry from left child of root to right child.

21

After Re-distribution

10. Trees

- Intuitively, entries are re-distributed by pushing through the splitting entry in the parent node.

- It suffices to re-distribute the index entry with key 20; we've re-distributed 17 as well for illustration.

[Figure: the tree after re-distribution]

Root: 17

Index pages: [5 13] [20 22 30]

Leaf pages: [2 3] [5 7 8] [14 16] [17 18] [20 21] [22 27 29] [33 34 38 39]

22

B+ Trees in Practice

10. Trees

- Typical values for B+ tree parameters:

- Page size: 8K

- Key: at most 8 bytes (compression later)

- Pointer: at most 4 bytes

- Thus entries in the index are at most 12 bytes, and a page can hold at least 682 entries (8192 / 12).

- Occupancy is about 67%, so a page holds about 455 entries; estimate that conservatively with 256 = 2^8.

- Top two levels often in memory:

- Top level, root of tree: 1 page = 8K bytes

- Next level: 2^8 pages = 2^8 x 2^3 K bytes = 2 Megabytes

23

B-Trees vs Hash Indexes

10. Trees

- A typical B-tree height is 2-3:

- Height 0 supports 2^8 = 256 records

- Height 2 supports 2^24 = 16M records

- Height 3 supports 2^32 = 4G records

- A B-tree of height 2-3 requires 2-3 I/Os:

- Including one I/O to access data

- Assuming top two levels are in memory

- Assuming alternative 2 or 3

- This is why DBMSs either don't support or don't recommend hash indexes on base tables.

- Though hashing is widely used elsewhere.
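The arithmetic behind these estimates can be checked with a short sketch. It assumes the parameters quoted in these slides (8K pages, 8-byte keys, 4-byte pointers, a conservative fanout of 2^8, top two levels cached) and assumes each leaf holds as many data entries as the fanout; the function names are hypothetical.

```python
PAGE_BYTES = 8 * 1024
KEY_BYTES, POINTER_BYTES = 8, 4

# Upper bound on index entries per page with 12-byte entries.
entries_per_page = PAGE_BYTES // (KEY_BYTES + POINTER_BYTES)

FANOUT = 2 ** 8  # conservative estimate used in the slides


def records_supported(height):
    """Data entries reachable in a tree of the given height (0 = a lone leaf)."""
    return FANOUT ** (height + 1)


def ios_per_lookup(height, levels_in_memory=2):
    """Page reads for one equality lookup under alternative 2 or 3.

    Index levels not cached in memory cost one I/O each, plus one I/O
    to fetch the data record itself.
    """
    levels = height + 1
    return max(levels - levels_in_memory, 0) + 1


for h in (0, 2, 3):
    print(h, records_supported(h), ios_per_lookup(h))
```

Height 2 gives 2^24 (about 16 million) entries at 2 I/Os per lookup, and height 3 gives 2^32 entries at 3 I/Os.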

24

Prefix Key Compression

10. Trees

- Important to increase fan-out. (Why?)

- Key values in index entries only direct traffic; can often compress them.

- E.g., if we have adjacent index entries with search key values Dannon Yogurt, David Smith and Devarakonda Murthy, we can abbreviate David Smith to Dav. (The other keys can be compressed too ...)

- Is this correct? Not quite! What if there is a data entry Davey Jones? (Can only compress David Smith to Davi.)

- In general, while compressing, must leave each index entry greater than every key value (in any subtree) to its left.

- Insert/delete must be suitably modified.
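The compression rule can be sketched as a search for the shortest prefix that still compares greater than the largest key to its left. This is a minimal illustration using Python's built-in lexicographic string comparison; the function name `shortest_separator` is hypothetical.

```python
def shortest_separator(largest_left_key, key):
    """Shortest prefix of key that is still greater than largest_left_key."""
    for n in range(1, len(key) + 1):
        prefix = key[:n]
        if prefix > largest_left_key:   # lexicographic comparison
            return prefix
    raise ValueError("key must be greater than every key to its left")


# Only the neighbouring index entry is considered: too aggressive.
print(shortest_separator("Dannon Yogurt", "David Smith"))  # Dav
# The largest data entry in the left subtree is considered: correct.
print(shortest_separator("Davey Jones", "David Smith"))    # Davi
```

The second call shows why the comparison must use the largest key anywhere in the left subtree, not just the adjacent index entry.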

25

Bulk Loading of a B+ Tree

10. Trees

- If we have a large collection of records, and we want to create a B+ tree on some field, doing so by repeatedly inserting records is very slow.

- Bulk Loading can be done much more efficiently.

- Initialization: sort all data entries, insert pointer to first (leaf) page in a new (root) page.

[Figure: a new root page pointing to the first of the sorted pages of data entries, which are not yet in the B+ tree]

26

Bulk Loading (Contd.)

10. Trees

- Index entries for leaf pages are always entered into the right-most index page just above leaf level. When this fills up, it splits. (Split may go up the right-most path to the root.)

- Much faster than repeated inserts, especially when one considers locking!

27

Summary of Bulk Loading

10. Trees

- Option 1: multiple inserts.

- Slow.

- Does not give sequential storage of leaves.

- Option 2: Bulk Loading

- Has advantages for concurrency control.

- Fewer I/Os during build.

- Leaves will be stored sequentially (and linked, of course).

- Can control fill factor on pages.
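A minimal sketch of Option 2 follows. Note that it is a simplification: instead of inserting into the right-most index page and splitting it when full, it packs each level completely before building the level above, which produces sequential leaves and honours a fill factor but may leave the last node of a level under-full. The function name `bulk_load`, its parameters and the returned structure are hypothetical.

```python
def bulk_load(sorted_entries, leaf_capacity, fanout, fill_factor=1.0):
    """Build B+ tree levels bottom-up from already sorted data entries.

    Returns a list of levels, leaves first. Each index node is a pair
    (keys, children), where children are positions in the level below.
    """
    if fanout < 2:
        raise ValueError("fanout must be at least 2")
    per_leaf = max(1, int(leaf_capacity * fill_factor))
    leaves = [sorted_entries[i:i + per_leaf]
              for i in range(0, len(sorted_entries), per_leaf)]
    levels = [leaves]
    low_keys = [leaf[0] for leaf in leaves]    # smallest key under each node
    while len(levels[-1]) > 1:
        count = len(levels[-1])
        nodes, next_low = [], []
        for start in range(0, count, fanout):
            children = list(range(start, min(start + fanout, count)))
            keys = [low_keys[c] for c in children[1:]]   # separator keys
            nodes.append((keys, children))
            next_low.append(low_keys[start])
        levels.append(nodes)
        low_keys = next_low
    return levels


levels = bulk_load(list(range(1, 13)), leaf_capacity=2, fanout=3)
for level in levels:
    print(level)
```

Twelve entries with two entries per leaf and a fanout of 3 yield six leaves, two index nodes and a root with the single key 7. Passing a `fill_factor` below 1.0 leaves free space in each leaf for later inserts.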

28

A Note on Order

10. Trees

- Order (d) concept replaced by physical space criterion in practice (at least half-full).

- Index pages can typically hold many more entries than leaf pages.

- Variable-sized records and search keys mean different nodes will contain different numbers of entries.

- Even with fixed-length fields, multiple records with the same search key value (duplicates) can lead to variable-sized data entries (if we use Alternative (3)).

29

10.8.4 Effect of Inserts and Deletes on RIDs

- The text raises this problem:

- Suppose there is an index using alternative 1.

- As happens with SQL Server and Oracle if a primary index is declared on a table.

- RIDs will change with updates and deletes.

- Why? Splits and merges.

- Then pointers in other, secondary, indexes will be wrong.

- Text suggests that index pointers can be updated.

- This is impractical.

- What do SQL Server and Oracle do?

- They use logical RIDs in secondary indexes.

30

Logical Pointers in Data Entries

- What is a logical pointer?

- A primary key value

- For example, an Employee ID

- Thus a data entry for an age index might be <42, C24>

- 42 is the age, C24 is the ID of an employee aged 42.

- To find that employee with age 42, must use the primary key index!

- This approach makes primary key indexes faster (alternative 1 instead of 2) but secondary key indexes slower.
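The two-step lookup can be sketched with plain dictionaries standing in for the two indexes. Everything here is illustrative: the record contents, the names `primary_index`, `age_index` and `employees_with_age` are assumptions, and real systems use trees rather than dictionaries.

```python
# Primary key index using alternative 1: the index holds the records themselves.
primary_index = {
    "C24": {"id": "C24", "age": 42},   # hypothetical employee record
    "C31": {"id": "C31", "age": 35},   # hypothetical employee record
}

# Secondary index on age: data entries are <age, primary key>, not <age, rid>.
age_index = {42: ["C24"], 35: ["C31"]}


def employees_with_age(age):
    """Secondary lookup yields primary keys; each needs a primary-index probe."""
    return [primary_index[pk] for pk in age_index.get(age, [])]


print(employees_with_age(42))
```

Because the secondary index stores C24 rather than a physical location, a split or merge that moves the record does not invalidate the entry; the price is the extra probe of the primary key index on every secondary lookup.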

31

11. Hash-based Indexes review

11.Hash

- As for any index, 3 alternatives for data entries k*:

- Data record with key value k

- <k, rid of data record with search key value k>

- <k, list of rids of data records with search key k>

- Choice is orthogonal to the indexing technique.

- Hash-based indexes are best for equality selections. Cannot support range searches.

- Static and dynamic hashing techniques exist; trade-offs similar to ISAM vs. B+ trees.

32

Static Hashing

11.Hash

- # primary pages fixed, allocated sequentially, never de-allocated; overflow pages if needed.

- h(k) mod M = bucket to which data entry with key k belongs. (M = # of buckets)

[Figure: key is passed through h; h(key) mod M selects one of the primary bucket pages 0, 1, ..., M-1, each of which may have a chain of overflow pages]

33

Static Hashing (Contd.)

11.Hash

- Buckets contain data entries.

- Hash fn works on search key field of record r. Must distribute values over range 0 ... M-1.

- h(key) = (a * key + b) usually works well.

- a and b are constants; lots known about how to tune h.

- Long overflow chains can develop and degrade performance.

- Extendible and Linear Hashing: dynamic techniques to fix this problem.
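A minimal sketch of static hashing shows how overflow chains grow when many keys land in one bucket. It assumes integer keys, pages modelled as Python lists, and arbitrary illustrative constants for a and b; the class name `StaticHashFile` is hypothetical.

```python
class StaticHashFile:
    """Static hashing sketch: M fixed primary pages plus overflow chains."""

    def __init__(self, num_buckets, page_capacity, a=31, b=7):
        self.m = num_buckets
        self.capacity = page_capacity
        self.a, self.b = a, b          # illustrative constants, not tuned
        # Each bucket is a chain of pages: page 0 is primary, the rest overflow.
        self.buckets = [[[]] for _ in range(num_buckets)]

    def _bucket(self, key):
        return (self.a * key + self.b) % self.m

    def insert(self, key):
        chain = self.buckets[self._bucket(key)]
        if len(chain[-1]) == self.capacity:
            chain.append([])           # last page is full: add an overflow page
        chain[-1].append(key)

    def search(self, key):
        """Return (found, pages_read); cost grows with the chain length."""
        chain = self.buckets[self._bucket(key)]
        for pages_read, page in enumerate(chain, start=1):
            if key in page:
                return True, pages_read
        return False, len(chain)


f = StaticHashFile(num_buckets=4, page_capacity=2, a=1, b=0)
for k in (0, 4, 8, 12, 16):            # all of these hash to bucket 0
    f.insert(k)
print(f.buckets[0], f.search(16))
```

Five keys in a bucket with two-entry pages already need three page reads to reach the last one, which is the degradation the dynamic schemes are designed to avoid.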

34

11.2 Extendible Hashing

11.Hash

- Situation: bucket (primary page) becomes full. Why not re-organize file by doubling # of buckets?

- Reading and writing all pages is expensive!

- Idea: use directory of pointers to buckets, double # of buckets by doubling the directory, splitting just the bucket that overflowed!

- Directory much smaller than file, so doubling it is much cheaper. Only one page of data entries is split. No overflow page!

- Trick lies in how hash function is adjusted!

35

Insert Example

[Figure: directory with global depth 2; entries 00, 01, 10, 11 point to the data pages]

Bucket A (local depth 2, entry 00): 4, 12, 32, 16

Bucket B (local depth 2, entry 01): 1, 5, 21, 13

Bucket C (local depth 2, entry 10): 10

Bucket D (local depth 2, entry 11): 15, 7, 19

- Directory is array of size 4.

- To find bucket for r, take last global depth # bits of h(r); we denote r by h(r).

- If h(r) = 5 = binary 101, it is in bucket pointed to by 01.

- Insert: if bucket is full, split it (allocate new page, re-distribute).

- If necessary, double the directory. (As we will see, splitting a bucket does not always require doubling; we can tell by comparing global depth with local depth for the split bucket.)

36

Insert h(r)20 (Causes Doubling)

11.Hash

[Figure: two views of the file. Left: Bucket A has been split into A and its split image A2 while the directory still has 4 entries. Right: the directory has been doubled to global depth 3, with entries 000 to 111]

Bucket A (local depth 3, entry 000): 32, 16

Bucket B (local depth 2, entries 001 and 101): 1, 5, 21, 13

Bucket C (local depth 2, entries 010 and 110): 10

Bucket D (local depth 2, entries 011 and 111): 15, 7, 19

Bucket A2 (local depth 3, entry 100; split image of Bucket A): 4, 12, 20

37

Points to Note

11.Hash

- 20 = binary 10100. Last 2 bits (00) tell us r belongs in A or A2. Last 3 bits needed to tell which.

- Global depth of directory: max # of bits needed to tell which bucket an entry belongs to.

- Local depth of a bucket: # of bits used to determine if an entry belongs to this bucket.

- When does bucket split cause directory doubling?

- Before insert, local depth of bucket = global depth. Insert causes local depth to become > global depth; directory is doubled by copying it over and fixing pointer to split image page. (Use of least significant bits enables efficient doubling via copying of directory!)
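The insert procedure, including the doubling test, can be sketched as follows. This is a simplified in-memory illustration: entries are identified with their hash values, buckets are Python objects rather than pages, and the class names `Bucket` and `ExtendibleHash` are hypothetical. As the slides warn for entries with the same hash value, more than `capacity` identical values would make this sketch split forever.

```python
class Bucket:
    def __init__(self, local_depth):
        self.local_depth = local_depth
        self.entries = []


class ExtendibleHash:
    def __init__(self, global_depth=2, capacity=4):
        self.global_depth = global_depth
        self.capacity = capacity
        self.directory = [Bucket(global_depth) for _ in range(2 ** global_depth)]

    def _slot(self, h):
        return h & ((1 << self.global_depth) - 1)   # last global_depth bits

    def insert(self, h):
        bucket = self.directory[self._slot(h)]
        if len(bucket.entries) < self.capacity:
            bucket.entries.append(h)
            return
        if bucket.local_depth == self.global_depth:
            # Doubling by copying works because least significant bits are used.
            self.directory = self.directory + self.directory
            self.global_depth += 1
        bucket.local_depth += 1
        image = Bucket(bucket.local_depth)           # the split image
        high_bit = 1 << (bucket.local_depth - 1)     # the newly examined bit
        old = bucket.entries
        bucket.entries = [e for e in old if not e & high_bit]
        image.entries = [e for e in old if e & high_bit]
        for i, b in enumerate(self.directory):
            if b is bucket and i & high_bit:
                self.directory[i] = image            # fix pointers to the image
        self.insert(h)                               # retry; may split again


eh = ExtendibleHash(global_depth=2, capacity=4)
for h in (4, 12, 32, 16, 1, 5, 21, 13, 10, 15, 7, 19):
    eh.insert(h)
eh.insert(20)   # bucket 00 is full and its local depth equals the global depth
print(eh.global_depth, eh.directory[0].entries, eh.directory[4].entries)
```

Loading the twelve entries of the example and then inserting 20 doubles the directory to 8 elements, leaves 32 and 16 in the original bucket, and places 4, 12 and 20 in the split image, while the other three buckets are each shared by two directory elements.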

38

Performance, Deletions

11.Hash

- If directory fits in memory, equality search answered with one disk access; else two.

- 100MB file, 100 bytes/rec, contains 1,000,000 records (as data entries). If pages are 4K (about 40 records each), the file needs 25,000 pages and so 25,000 directory elements; chances are high that directory will fit in memory.

- Directory grows in spurts, and, if the distribution of hash values is skewed, directory can grow large.

- Multiple entries with same hash value cause problems!

- Delete: if removal of data entry makes bucket empty, can be merged with split image. If each directory element points to same bucket as its split image, can halve directory.

39

11.3 Linear Hashing

11.Hash

- This is another dynamic hashing scheme, an alternative to Extendible Hashing.

- LH handles the problem of long overflow chains without using a directory, and handles duplicates.

- Idea: use a family of hash functions h_0, h_1, h_2, ...

- h_i(key) = h(key) mod (2^i N); N = initial # buckets

- h is some hash function (range is not 0 to N-1)

- If N = 2^d0, for some d0, h_i consists of applying h and looking at the last d_i bits, where d_i = d0 + i.

- h_(i+1) doubles the range of h_i (similar to directory doubling)

40

Linear Hashing (Contd.)

11.Hash

- Directory avoided in LH by using overflow pages, and choosing bucket to split round-robin.

- Splitting proceeds in rounds. Round ends when all N_R initial (for round R) buckets are split. Buckets 0 to Next-1 have been split; Next to N_R yet to be split.

- Current round number is Level.

- Search: to find bucket for data entry r, find h_Level(r):

- If h_Level(r) in range Next to N_R, r belongs here.

- Else, r could belong to bucket h_Level(r) or bucket h_Level(r) + N_R; must apply h_(Level+1)(r) to find out.

41

Overview of LH File

11.Hash

- In the middle of a round.

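[Figure: the LH file in the middle of a round. From the start of the file: the buckets already split in this round; then Next, the bucket to be split; then the remaining buckets that existed at the beginning of this round (together these form the range of h_Level); and finally the split image buckets created, through splitting of other buckets, in this round. If h_Level(search key value) falls among the buckets already split, must use h_(Level+1)(search key value) to decide if the entry is in the split image bucket.]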

42

When to split?

11.Hash

- Insert: find bucket by applying h_Level / h_(Level+1):

- If bucket to insert into is full:

- Add overflow page and insert data entry.

- (Maybe) split Next bucket and increment Next.

- Can choose any criterion to trigger split.

- Since buckets are split round-robin, long overflow chains don't develop!

- Doubling of directory in Extendible Hashing is similar; switching of hash functions is implicit in how the # of bits examined is increased.
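Search, insert and split can be sketched together. The sketch below makes several assumptions: h(key) = key, each bucket is one list standing for its primary page plus any overflow pages, and a split is triggered whenever an insert needs overflow space (one of the many possible criteria). The class name `LinearHash` and the initial bucket contents are illustrative.

```python
class LinearHash:
    def __init__(self, n_initial=4, capacity=4):
        self.n0 = n_initial
        self.capacity = capacity        # entries per primary page
        self.level = 0                  # current round number
        self.next = 0                   # next bucket to split
        self.buckets = [[] for _ in range(n_initial)]

    def _h(self, i, key):
        return key % (2 ** i * self.n0)          # h_i, assuming h(key) = key

    def bucket_of(self, key):
        b = self._h(self.level, key)
        if b < self.next:                        # bucket already split this round
            b = self._h(self.level + 1, key)
        return b

    def insert(self, key):
        bucket = self.buckets[self.bucket_of(key)]
        needs_overflow = len(bucket) >= self.capacity
        bucket.append(key)
        if needs_overflow:                       # chosen split criterion
            self._split()

    def _split(self):
        old = self.buckets[self.next]
        stay = [k for k in old if self._h(self.level + 1, k) == self.next]
        move = [k for k in old if self._h(self.level + 1, k) != self.next]
        self.buckets[self.next] = stay
        self.buckets.append(move)                # image is bucket Next + N_R
        self.next += 1
        if self.next == 2 ** self.level * self.n0:   # all N_R buckets split
            self.level += 1
            self.next = 0


lh = LinearHash(n_initial=4, capacity=4)
lh.buckets = [[32, 44, 36], [9, 25, 5], [14, 18, 10, 30], [31, 35, 7, 11]]
lh.insert(43)   # bucket 3 is full, so bucket Next = 0 is the one that splits
print(lh.next, lh.buckets[0], lh.buckets[3], lh.buckets[4], lh.bucket_of(44))
```

Note that the bucket that overflows (bucket 3) is not the bucket that is split (bucket 0): 43 goes to overflow space, while 44 and 36 move to the new bucket 4 and are found there afterwards through h_1.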

43

Example of Linear Hashing

11.Hash

- On split, h_(Level+1) is used to re-distribute entries.

[Figure: Level=0, N=4, Next=0. The h_1 and h_0 columns are for illustration only; the primary pages are the actual contents of the linear hashed file. The data entry r with h(r)=5 is stored in bucket 01.]

h_1 = 000, h_0 = 00: 32, 44, 36

h_1 = 001, h_0 = 01: 9, 25, 5

h_1 = 010, h_0 = 10: 14, 18, 10, 30

h_1 = 011, h_0 = 11: 31, 35, 7, 11

44

Example End of a Round

11.Hash

[Figure, left: Level=0, Next=3]

000: 32

001: 9, 25

010: 66, 18, 10, 34

011: 31, 35, 7, 11; overflow page: 43

100: 44, 36

101: 5, 37, 29

110: 14, 30, 22

[Figure, right: Level=1, Next=0]

000: 32

001: 9, 25

010: 66, 18, 10, 34; overflow page: 50

011: 43, 35, 11

100: 44, 36

101: 5, 37, 29

110: 14, 30, 22

111: 31, 7

45

LH Described as a Variant of EH

11.Hash

- The two schemes are actually quite similar:

- Begin with an EH index where directory has N elements.

- Use overflow pages, split buckets round-robin.

- First split is at bucket 0. (Imagine directory being doubled at this point.) But elements <1,N+1>, <2,N+2>, ... are the same. So, need only create directory element N, which differs from 0, now.

- When bucket 1 splits, create directory element N+1, etc.

- So, directory can double gradually. Also, primary bucket pages are created in order. If they are allocated in sequence too (so that finding the i'th is easy), we actually don't need a directory! Voila, LH.