The Hixon Symposium - PowerPoint PPT Presentation


1

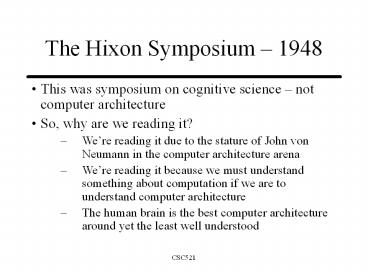

The Hixon Symposium 1948

- This was a symposium on cognitive science, not computer architecture
- So, why are we reading it?
- We're reading it due to the stature of John von Neumann in the computer architecture arena
- We're reading it because we must understand something about computation if we are to understand computer architecture
- The human brain is the best computer architecture around, yet the least well understood

2

Why von Neumann?

- von Neumann's reasons for attending were many-fold
- Interest/expertise in artificial automata (computing machines) and their similarity to the brain
- Interest in self-replicating systems
- He hoped to gain an understanding of the human brain to drive the direction of computer architecture development
- He hoped that through the study of artificial automata new insights could be gained regarding the structure/operation of the human brain

3

von Neumann's Goal

- von Neumann's goal was to draw an analogy between artificial automata and natural organisms through the eyes of a mathematician
- His approach is that of divide-and-conquer: understand the elementary units, then understand how they function as a whole
- He then proceeded to write off the elementary units and accept them as black boxes that receive an input and deterministically produce an output (The Axiomatic Procedure)

4

What are Artificial Automata?

- Artificial automata = computing machine
- Long chain of events within a computing machine = program

5

Fixation on Multiplication

- Multiplication as the gauge

- Use of a computing machine was determined by the number of multiplications required by the computation: computers are only justified for problems requiring one million or more multiplications
- Difference between organic systems and artificial automata is that organic systems can be inexact yet still arrive at a correct answer. Artificial automata must perform every step flawlessly or errors may occur
- Consider what happens if an LSB is flipped
- Consider what happens if an MSB is flipped
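To make the LSB/MSB comparison concrete, here is a minimal sketch. The 16-bit word size, the example value, and the helper name `flip_bit` are assumptions chosen for illustration; they do not come from the symposium paper.

```python
def flip_bit(value: int, bit: int) -> int:
    """Return value with the given bit position inverted (bit 0 is the LSB)."""
    return value ^ (1 << bit)

WORD_BITS = 16      # assumed word size, for illustration only
original = 25_000   # arbitrary example value

lsb_flipped = flip_bit(original, 0)
msb_flipped = flip_bit(original, WORD_BITS - 1)

print(f"original:    {original}")
print(f"LSB flipped: {lsb_flipped} (off by {abs(lsb_flipped - original)})")
print(f"MSB flipped: {msb_flipped} (off by {abs(msb_flipped - original)})")
```

The same single-component fault is negligible in the lowest position (off by 1) and catastrophic in the highest (off by 32768), which is why every step has to be flawless.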

6

Two Types of Computers

- Analogy (analog)

- Either electrical or mechanical (rotating discs with angle of rotation representing the analog value)
- Inherently inaccurate (noisy) (The Analogy Principle)
- Use statistics to gain accuracy/reduce the effects of noise (improve signal-to-noise ratio, where the noise is error-prone calculations)
- Consider averaging noisy samples
- Noise is reduced by a factor of the square root of the number of samples averaged (a mathematical proof exists)
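The square-root claim can be checked numerically. The sketch below assumes independent, zero-mean Gaussian noise; the true value, noise level, trial count, and function names are all illustrative choices, not taken from the slides.

```python
import random
import statistics

def noisy_reading(true_value: float, sigma: float, rng: random.Random) -> float:
    """One measurement: the true value plus zero-mean Gaussian noise."""
    return rng.gauss(true_value, sigma)

def averaged_reading(true_value: float, sigma: float, n: int, rng: random.Random) -> float:
    """Average n independent noisy measurements."""
    return statistics.fmean([noisy_reading(true_value, sigma, rng) for _ in range(n)])

def spread_of_average(n: int, trials: int = 2000, seed: int = 0) -> float:
    """Empirical standard deviation of the n-sample average over many trials."""
    rng = random.Random(seed)
    means = [averaged_reading(1.0, 0.5, n, rng) for _ in range(trials)]
    return statistics.pstdev(means)

for n in (1, 4, 16, 64):
    print(f"n={n:3d}  spread of average ~ {spread_of_average(n):.4f}")
```

Each time `n` is quadrupled the spread should roughly halve, which is the square-root behaviour described above.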

7

Differential Analyzer

- MIT, 1930s, Vannevar Bush

- First well-integrated analog computer

- Rods and wheels

- Solved differential equations

8

Two Types of Computers

- Digital

- At the time machines were decimal: "all digital machines built to date operate in this system"
- Prediction: "the binary (base 2) system will, in the end, prove preferable ... now under construction"
- ENIAC was completed in 1945: mathematical table generator based on decimal data
- EDVAC came afterwards: programmable machine based on binary data
- Perfectly accurate so long as components work as designed (The Digital Principle)

9

ENIAC

- First large-scale electronic digital computer

10

Two Types of Computers

- Digital

- Inaccuracies arise due to limitations of word size, similar to those of the analogy principle
- Use more bits to gain accuracy/reduce the effects of noise (improve signal-to-noise ratio, where the noise is round-off error)
- This is why digital computation may be considered more powerful than analog
- Clearly depends on one's definition of powerful
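A small sketch of how word size controls round-off error. It assumes a simple fixed-point format with round-to-nearest; the function name `quantize` and the example value are hypothetical and only meant to illustrate the trade-off.

```python
def quantize(x: float, frac_bits: int) -> float:
    """Round x to the nearest multiple of 2**-frac_bits (fixed-point storage)."""
    scale = 1 << frac_bits
    return round(x * scale) / scale

value = 0.1  # not exactly representable in binary at any finite word size

for frac_bits in (4, 8, 16, 24):
    error = abs(quantize(value, frac_bits) - value)
    bound = 2.0 ** -(frac_bits + 1)  # worst-case error of round-to-nearest
    print(f"{frac_bits:2d} fractional bits: error = {error:.3e} (bound {bound:.3e})")
```

Each additional bit halves the worst-case error, so precision grows exponentially with word size, whereas averaging analog samples only improves with the square root of the effort.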

11

Artificial Automata vs. Organic Processing

- Organic contains both digital and analog processing
- Neuron firings (outputs) are all-or-none computations (threshold)
- Technically speaking they are analog, but when viewed as a black box they act digital
- Internal to the neuron is a chemical, humoral (analog) process
- Artificial automata are purely digital (although analog machines existed, von Neumann did not consider them in this context)
- Technically speaking the vacuum tubes of the day (and the ICs of today) are analog, but when viewed as a black box they act digital
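The black-box view can be sketched as a threshold applied to a noisy analog level. The threshold of 0.5, the noise range, and the name `switching_organ` are assumptions for illustration; real tubes, transistors, and neurons are far more involved.

```python
import random

THRESHOLD = 0.5  # assumed decision level, for illustration only

def switching_organ(analog_level: float) -> int:
    """Black-box view: any analog level maps to an all-or-none output."""
    return 1 if analog_level >= THRESHOLD else 0

rng = random.Random(1)
intended_bits = [1, 0, 1, 1, 0]

# Internally, each bit is a noisy analog level near 0.0 or 1.0.
analog_levels = [bit + rng.uniform(-0.3, 0.3) for bit in intended_bits]
recovered_bits = [switching_organ(level) for level in analog_levels]

print([round(level, 2) for level in analog_levels])
print(recovered_bits)
```

As long as the noise stays below the distance to the threshold, the recovered bits match the intended ones exactly, even though no internal level is ever exactly 0 or 1.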

12

Vacuum Tubes (for those too young to remember)

- Vacuum Tube

- Nixie Tube

13

Artificial Automata vs. Organic Processors

- The digital nature of both the neuron and the vacuum tube/IC is a form of Abstraction (which is good for us Computer Scientists)
- Both use switching organs
- Analog → neuron
- Digital → mechanical relay or vacuum tube

14

Some Predictions

- "It is quite possible that computing machines will not always be primarily aggregates of switching organs, but such a development is as yet quite far in the future."
- "A development which may lie much closer is that the vacuum tubes may be displaced from their role of switch organs in computing machines. This, too, however, will probably not take place for a few years yet."

15

Some Factual Statements

- "To sum up, about 10^4 switching organs seem to be the proper order of magnitude for a computing machine. In contrast to this, the number of neurons in the central nervous system has been variously estimated as something of the order of 10^10."
- The implication being that the number of switching organs is the primary drawback, but we know that algorithms (or lack thereof) are another sticky wicket.

16

Some Beliefs

- Didn't believe that speed was an issue, since neurons are relatively slow compared to vacuum tubes (and today's ICs)
- Physical comparisons between the ENIAC and the human brain
- 30 tons vs. 1 pound
- Regenerative nature of the organic systems (able to repair themselves)
- Inability of artificial automata to do so

17

Conclusion

- Inferiority of materials used in artificial automata is the primary culprit.
- If we had better raw materials to work with, then we could build an artificial automaton that could mimic the behaviors of organic processors

18

Limiting Factors on Artificial Automata

- Complication of organic systems

- We don't fully understand them
- We can't physically build anything nearly as complex
- Available materials and knowledge of how to use them

19

Limiting Factors on Artificial Automata

- Lack of a logical theory of automata

- Turing proved that anything that can be described algorithmically can be computed in a finite number of steps
- But, current machines won't work because of component failures
- Algorithms must be made fault tolerant (ref. signal-to-noise discussion, above)
- Nature does this by making the effect of the failure unimportant (distributed representation)
- Artificial automata must deal with the failure immediately

20

Limiting Factors on Artificial Automata

- Method of data representation

- Organic systems tend to represent data in a temporal form, e.g. counting over time
- Artificial automata tend to represent data in a spatial form, e.g. the binary number system
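The two representations can be contrasted with a short sketch. The pulse-train encoding below is a deliberately simplified stand-in for "counting over time" and is an assumption, as are all the function names; it is not a model of real neural coding.

```python
def to_pulse_train(value: int, window: int) -> list[int]:
    """Temporal form: emit `value` pulses within `window` time slots."""
    if not 0 <= value <= window:
        raise ValueError("value must fit in the time window")
    return [1] * value + [0] * (window - value)

def from_pulse_train(pulses: list[int]) -> int:
    """Decode by counting pulses over time."""
    return sum(pulses)

def to_binary(value: int, width: int) -> list[int]:
    """Spatial form: one bit per position, most significant bit first."""
    return [(value >> i) & 1 for i in reversed(range(width))]

def from_binary(bits: list[int]) -> int:
    """Decode by weighting each position by its power of two."""
    result = 0
    for bit in bits:
        result = (result << 1) | bit
    return result

value = 11
pulses = to_pulse_train(value, window=15)
bits = to_binary(value, width=4)
print(len(pulses), pulses)
print(len(bits), bits)
```

The temporal form needs 15 time slots where the spatial form needs 4 positions, but in the temporal form every pulse carries the same weight, so no single pulse is more critical than another.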

21

Limiting Factors on Artificial Automata

- Fault tolerance

- Organic systems tend to minimize the importance of isolated errors
- In many cases they are self-correcting
- Artificial automata tend to be hindered by isolated errors and disabled by multiple errors
- They must be detected as soon as they occur so as to not adversely affect later results
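One common way to detect an error as soon as it occurs is a parity bit. This technique is not named in the slides; it is used here only as a minimal, assumed example of error detection, and the helper names are hypothetical.

```python
def add_parity(bits: list[int]) -> list[int]:
    """Append an even-parity bit so the total number of 1s is even."""
    return bits + [sum(bits) % 2]

def parity_ok(word: list[int]) -> bool:
    """Return True if the word still has an even number of 1s."""
    return sum(word) % 2 == 0

def flip(word: list[int], index: int) -> list[int]:
    """Return a copy of word with one bit inverted, modelling a component fault."""
    corrupted = word.copy()
    corrupted[index] ^= 1
    return corrupted

word = add_parity([1, 0, 1, 1, 0, 0, 1, 0])
one_error = flip(word, 3)
two_errors = flip(one_error, 6)

print(parity_ok(word))        # True: no fault
print(parity_ok(one_error))   # False: the isolated error is detected
print(parity_ok(two_errors))  # True: two errors cancel out and go unnoticed
```

The check catches an isolated error but is defeated by a second one, which mirrors the point that artificial automata are hindered by isolated errors and disabled by multiple errors.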

22

Limiting Factors on Artificial Automata

- Intellectual inadequacy: we just don't know how to do it!
- McCulloch-Pitts tied Turing's work to artificial neural networks
- That is, if you can describe it, we can implement it with Artificial Neural Networks (formal neural networks)
- The underlying problem is the specification of the algorithm!!!
- von Neumann alludes to training a pattern recognizer through the phrase "complete catalogue" but admits the size is prohibitive
- Does this contradict the definition of a computer that says the answers are not stored in the system?
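The "if you can describe it, we can implement it" claim can be illustrated with threshold units wired into logic gates. This is a simplified sketch: it uses a negative weight for inhibition, which is an assumption made for brevity rather than the exact formalism of the McCulloch-Pitts paper, and the function names are hypothetical.

```python
def mp_neuron(inputs, weights, threshold):
    """Threshold unit: fire (1) if and only if the weighted input sum reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0

def AND(a, b):
    return mp_neuron([a, b], [1, 1], threshold=2)

def OR(a, b):
    return mp_neuron([a, b], [1, 1], threshold=1)

def NOT(a):
    # An inhibitory input (weight -1) with threshold 0.
    return mp_neuron([a], [-1], threshold=0)

def XOR(a, b):
    # Two layers: (a OR b) AND NOT (a AND b).
    return AND(OR(a, b), NOT(AND(a, b)))

for a in (0, 1):
    for b in (0, 1):
        print(a, b, AND(a, b), OR(a, b), XOR(a, b))
```

Building the gates is the easy part. Someone still had to specify XOR as a combination of simpler operations, which is the slide's point about the specification of the algorithm being the underlying problem.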

23

Limiting Factors on Artificial Automata

- Conclusion

- McCulloch-Pitts' work is important but does not get us to an intelligent machine

24

The Turing Machine

- The ultimate computer architecture
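For readers who have not seen one, a Turing machine can be simulated in a few lines. The sketch below is an illustrative assumption, not something from the symposium paper: the transition table `INCREMENT`, its state names, and the `run` helper are hypothetical, and the machine simply adds one to a binary number on the tape.

```python
BLANK = "_"

# Transition table: (state, symbol) -> (symbol to write, head move, next state).
# This hypothetical machine adds one to a binary number written on the tape.
INCREMENT = {
    ("seek_end", "0"): ("0", +1, "seek_end"),
    ("seek_end", "1"): ("1", +1, "seek_end"),
    ("seek_end", BLANK): (BLANK, -1, "carry"),
    ("carry", "1"): ("0", -1, "carry"),
    ("carry", "0"): ("1", 0, "halt"),
    ("carry", BLANK): ("1", 0, "halt"),
}

def run(table, tape_input, start_state="seek_end", max_steps=10_000):
    """Run the machine until it reaches 'halt'; return the non-blank tape contents."""
    tape = dict(enumerate(tape_input))  # sparse tape: position -> symbol
    head, state, steps = 0, start_state, 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = tape.get(head, BLANK)
        write, move, state = table[(state, symbol)]
        tape[head] = write
        head += move
        steps += 1
    cells = [tape.get(i, BLANK) for i in range(min(tape), max(tape) + 1)]
    return "".join(cells).strip(BLANK)

print(run(INCREMENT, "1011"))
print(run(INCREMENT, "111"))
```

The whole "architecture" is a tape, a head, and a lookup table. Everything else is a matter of which table is supplied.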

25

Self Replicating Machines

- von Neumann then proceeds to discuss machines that can create copies of themselves as a means for discussing complexity
- Must the building machine be more complex than the one being built?
- His goal was to further the study of automata

26

So, Why was von Neumann at a Conference on Cerebral Processing?

- "... reflects merely the present, imperfect state of our technology, a state that will presumably improve with time"
- This is why we study computer architecture.
- To come up with an artificial automaton that will get us closer to an organic processor!
