CS 240A: Solving Ax b in parallel - PowerPoint PPT Presentation

1 / 59

Title:

CS 240A: Solving Ax b in parallel

Description:

The magic: Short recurrences. . . A is symmetric = can get next residual and direction ... Partition / reorder matrix to reduce communication. x. y. P0. P1. P2 ... – PowerPoint PPT presentation

Number of Views:56

Avg rating:3.0/5.0

Title: CS 240A: Solving Ax b in parallel

1

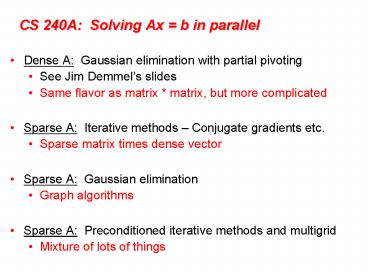

CS 240A Solving Ax b in parallel

- Dense A Gaussian elimination with partial

pivoting - See Jim Demmels slides

- Same flavor as matrix matrix, but more

complicated - Sparse A Iterative methods Conjugate

gradients etc. - Sparse matrix times dense vector

- Sparse A Gaussian elimination

- Graph algorithms

- Sparse A Preconditioned iterative methods and

multigrid - Mixture of lots of things

2

Data structures

- Full

- 2-dimensional array of real or complex numbers

- (nrowsncols) memory

- Sparse

- compressed column storage

- about (1.5nzs .5ncols) memory

D

3

The Landscape of Axb Solvers

More Robust

Less Storage (if sparse)

4

Complexity of linear solvers

Time to solve model problem (Poissons equation)

on regular mesh

5

Conjugate gradient iteration for Ax b

x0 0 approx solution r0 b

residual b - Ax d0 r0

search direction for k 1, 2, 3, . .

. xk xk-1

new approx solution rk

new residual dk

new search direction

6

Conjugate gradient iteration for Ax b

x0 0 approx solution r0 b

residual b - Ax d0 r0

search direction for k 1, 2, 3, . .

. ak step

length xk xk-1 ak dk-1

new approx solution rk

new residual dk

new

search direction

7

Conjugate gradient iteration for Ax b

x0 0 approx solution r0 b

residual b - Ax d0 r0

search direction for k 1, 2, 3, . .

. ak (rTk-1rk-1) / (dTk-1Adk-1) step

length xk xk-1 ak dk-1

new approx solution rk

new residual

dk

new search direction

8

Conjugate gradient iteration for Ax b

x0 0 approx solution r0 b

residual b - Ax d0 r0

search direction for k 1, 2, 3, . .

. ak (rTk-1rk-1) / (dTk-1Adk-1) step

length xk xk-1 ak dk-1

new approx solution rk

new residual ßk

(rTk rk) / (rTk-1rk-1) dk rk ßk dk-1

new search direction

9

Conjugate gradient iteration for Ax b

x0 0 approx solution r0 b

residual b - Ax d0 r0

search direction for k 1, 2, 3, . .

. ak (rTk-1rk-1) / (dTk-1Adk-1) step

length xk xk-1 ak dk-1

new approx solution rk rk-1 ak

Adk-1 new residual ßk

(rTk rk) / (rTk-1rk-1) dk rk ßk dk-1

new search direction

10

Conjugate gradient iteration

x0 0, r0 b, d0 r0 for k 1, 2,

3, . . . ak (rTk-1rk-1) / (dTk-1Adk-1)

step length xk xk-1 ak dk-1

approx solution rk rk-1 ak

Adk-1 residual ßk

(rTk rk) / (rTk-1rk-1) improvement dk

rk ßk dk-1

search direction

- One matrix-vector multiplication per iteration

- Two vector dot products per iteration

- Four n-vectors of working storage

11

Conjugate gradient Krylov subspaces

- Eigenvalues Av ?v ?1, ?2 ,

. . ., ?n - Cayley-Hamilton theorem

- (A ?1I)(A ?2I) (A ?nI) 0

- Therefore S ciAi 0 for some ci

- so A-1 S (ci/c0) Ai1

- Krylov subspace

- Therefore if Ax b, then x A-1 b and

- x ? span (b, Ab, A2b, . . ., An-1b) Kn (A, b)

0 ? i ? n

1 ? i ? n

12

Conjugate gradient Orthogonal sequences

- Krylov subspace Ki (A, b) span (b, Ab, A2b, .

. ., Ai-1b) - Conjugate gradient algorithm for i 1, 2, 3,

. . . find xi ? Ki (A, b) such that ri

(b Axi) ? Ki (A, b) - Notice ri ? Ki1 (A, b), so ri ? rj for all

j lt i - Similarly, the directions are

A-orthogonal (xi xi-1 )TA (xj xj-1 ) 0 - The magic Short recurrences. . . A is symmetric

gt can get next residual and direction from

the previous one, without saving them all.

13

Conjugate gradient Convergence

- In exact arithmetic, CG converges in n steps

(completely unrealistic!!) - Accuracy after k steps of CG is related to

- consider polynomials of degree k that are equal

to 1 at 0. - how small can such a polynomial be at all the

eigenvalues of A? - Thus, eigenvalues close together are good.

- Condition number ?(A) A2 A-12

?max(A) / ?min(A) - Residual is reduced by a constant factor by

O(?1/2(A)) iterations of CG.

14

Conjugate gradient Parallel implementation

- Lay out matrix and vectors by rows

- Hard part is matrix-vector product

y Ax - Algorithm

- Each processor j

- Broadcast x(j)

- Compute y(j) A(j,)x

- May send more of x than needed

- Partition / reorder matrix to reduce communication

15

Other Krylov subspace methods

- Nonsymmetric linear systems

- GMRES for i 1, 2, 3, . . . find xi ? Ki

(A, b) such that ri (Axi b) ? Ki (A,

b)But, no short recurrence gt save old vectors

gt lots more space - (Usually restarted every k iterations to use

less space.) - BiCGStab, QMR, etc.Two spaces Ki (A, b) and Ki

(AT, b) w/ mutually orthogonal basesShort

recurrences gt O(n) space, but less robust - Convergence and preconditioning more delicate

than CG - Active area of current research

- Eigenvalues Lanczos (symmetric), Arnoldi

(nonsymmetric)

16

Solving Axb in parallel

- Dense A Gaussian elimination with partial

pivoting - Same flavor as matrix matrix, but more

complicated - Sparse A Iterative methods Conjugate

gradients etc. - Sparse matrix times dense vector

- Sparse A Gaussian elimination

- Graph algorithms

- Sparse A Preconditioned iterative methods and

multigrid - Mixture of lots of things

17

Complexity of linear solvers

Time to solve model problem (Poissons equation)

on regular mesh

18

Complexity of direct methods

Time and space to solve any problem on any

well-shaped finite element mesh

19

Column Cholesky Factorization

- for j 1 n

- for k 1 j-1

- cmod(j,k)

- for i j n

- A(i,j) A(i,j) A(i,k)A(j,k)

- end

- end

- cdiv(j)

- A(j,j) sqrt(A(j,j))

- for i j1 n

- A(i,j) A(i,j) / A(j,j)

- end

- end

- Column j of A becomes column j of L

20

Sparse Column Cholesky Factorization

- for j 1 n

- for k lt j with A(j,k) nonzero

- sparse cmod(j,k)

- A(jn, j) A(jn, j) A(jn, k)A(j, k)

- end

- sparse cdiv(j)

- A(j,j) sqrt(A(j,j))

- A(j1n, j) A(j1n, j) / A(j,j)

- end

- Column j of A becomes column j of L

21

Graphs and Sparse Matrices Cholesky

factorization

Fill new nonzeros in factor

Symmetric Gaussian elimination for j 1 to n

add edges between js higher-numbered

neighbors

G(A)chordal

G(A)

22

Elimination Tree

G(A)

T(A)

Cholesky factor

- T(A) parent(j) min i gt j (i, j) in

G(A) - parent(col j) first nonzero row below diagonal

in L - T describes dependencies among columns of factor

- Can compute G(A) easily from T

- Can compute T from G(A) in almost linear time

23

Fill-reducing matrix permutations

- Minimum degree

- Eliminate row/col with fewest nzs, add fill,

repeat - Theory can be suboptimal even on 2D model

problem - Practice often wins for medium-sized problems

- Nested dissection

- Find a separator, number it last, proceed

recursively - Theory approx optimal separators gt approx

optimal fill and flop count - Practice often wins for very large problems

- Banded orderings (Reverse Cuthill-McKee, Sloan, .

. .) - Try to keep all nonzeros close to the diagonal

- Theory, practice often wins for long, thin

problems - Best modern general-purpose orderings are ND/MD

hybrids.

24

GEPP Gaussian elimination w/ partial pivoting

P

x

- PA LU

- Sparse, nonsymmetric A

- Columns may be preordered for sparsity

- Rows permuted by partial pivoting (maybe)

- High-performance machines with memory hierarchy

25

Column Elimination Tree

T?(A)

A

chol(ATA)

- Elimination tree of ATA (if no cancellation)

- Depth-first spanning tree of G?(A)

- Represents column dependencies in various

factorizations

26

Column Dependencies in PA LU

- If column j modifies column k, then j ? T?k.

27

Shared Memory SuperLU-MT

- 1D data layout across processors

- Dynamic assignment of panel tasks to processors

- Task tree follows column elimination tree

- Two sources of parallelism

- Independent subtrees

- Pipelining dependent panel tasks

- Single processor BLAS 2.5 SuperLU kernel

- Good speedup for 8-16 processors

- Scalability limited by 1D data layout

28

SuperLU-MT Performance Highlight (1999)

- 3-D flow calculation (matrix EX11, order 16614)

29

See Sherry Lis slides on SuperLU-MT

30

SuperLU-dist GE with static pivoting Li,

Demmel

- Target Distributed-memory multiprocessors

- Goal No pivoting during numeric factorization

- Permute A unsymmetrically to have large elements

on the diagonal (using weighted bipartite

matching) - Scale rows and columns to equilibrate

- Permute A symmetrically for sparsity

- Factor A LU with no pivoting, fixing up small

pivots - if aii lt e A then replace aii by

?e1/2 A - Solve for x using the triangular factors Ly

b, Ux y - Improve solution by iterative refinement

31

Row permutation for heavy diagonal Duff,

Koster

1

5

2

3

4

1

2

3

4

5

A

- Represent A as a weighted, undirected bipartite

graph (one node for each row and one node for

each column) - Find matching (set of independent edges) with

maximum product of weights - Permute rows to place matching on diagonal

- Matching algorithm also gives a row and column

scaling to make all diag elts 1 and all

off-diag elts lt1

32

Iterative refinement to improve solution

- Iterate

- r b Ax

- backerr maxi ( ri / (Ax b)i )

- if backerr lt e or backerr gt lasterr/2 then

stop iterating - solve LUdx r

- x x dx

- lasterr backerr

- repeat

- Usually 0 3 steps are enough

33

SuperLU-dist Distributed static data structure

Process(or) mesh

Block cyclic matrix layout

34

See Sherry Lis slides on SuperLU-dist

35

Solving Axb in parallel

- Dense A Gaussian elimination with partial

pivoting - Same flavor as matrix matrix, but more

complicated - Sparse A Iterative methods Conjugate

gradients etc. - Sparse matrix times dense vector

- Sparse A Gaussian elimination

- Graph algorithms

- Sparse A Preconditioned iterative methods and

multigrid - Mixture of lots of things

36

Conjugate gradient iteration

x0 0, r0 b, d0 r0 for k 1, 2,

3, . . . ak (rTk-1rk-1) / (dTk-1Adk-1)

step length xk xk-1 ak dk-1

approx solution rk rk-1 ak

Adk-1 residual ßk

(rTk rk) / (rTk-1rk-1) improvement dk

rk ßk dk-1

search direction

- One matrix-vector multiplication per iteration

- Two vector dot products per iteration

- Four n-vectors of working storage

37

Conjugate gradient Convergence

- In exact arithmetic, CG converges in n steps

(completely unrealistic!!) - Accuracy after k steps of CG is related to

- consider polynomials of degree k that are equal

to 1 at 0. - how small can such a polynomial be at all the

eigenvalues of A? - Thus, eigenvalues close together are good.

- Condition number ?(A) A2 A-12

?max(A) / ?min(A) - Residual is reduced by a constant factor by

O(?1/2(A)) iterations of CG.

38

Preconditioners

- Suppose you had a matrix B such that

- condition number ?(B-1A) is small

- By z is easy to solve

- Then you could solve (B-1A)x B-1b instead of Ax

b - Each iteration of CG multiplies a vector by B-1A

- First multiply by A

- Then solve a system with B

39

Preconditioned conjugate gradient iteration

x0 0, r0 b, d0 B-1 r0, y0

B-1 r0 for k 1, 2, 3, . . . ak

(yTk-1rk-1) / (dTk-1Adk-1) step length xk

xk-1 ak dk-1 approx

solution rk rk-1 ak Adk-1

residual yk B-1 rk

preconditioning

solve ßk (yTk rk) / (yTk-1rk-1)

improvement dk yk ßk dk-1

search direction

- One matrix-vector multiplication per iteration

- One solve with preconditioner per iteration

40

Choosing a good preconditioner

- Suppose you had a matrix B such that

- condition number ?(B-1A) is small

- By z is easy to solve

- Then you could solve (B-1A)x B-1b instead of Ax

b - B A is great for (1), not for (2)

- B I is great for (2), not for (1)

- Domain-specific approximations sometimes work

- B diagonal of A sometimes works

- Better blend in some direct-methods ideas. . .

41

Incomplete Cholesky factorization (IC, ILU)

- Compute factors of A by Gaussian elimination,

but ignore fill - Preconditioner B RTR ? A, not formed explicitly

- Compute B-1z by triangular solves (in time

nnz(A)) - Total storage is O(nnz(A)), static data structure

- Either symmetric (IC) or nonsymmetric (ILU)

42

Incomplete Cholesky and ILU Variants

- Allow one or more levels of fill

- unpredictable storage requirements

- Allow fill whose magnitude exceeds a drop

tolerance - may get better approximate factors than levels of

fill - unpredictable storage requirements

- choice of tolerance is ad hoc

- Partial pivoting (for nonsymmetric A)

- Modified ILU (MIC) Add dropped fill to

diagonal of U or R - A and RTR have same row sums

- good in some PDE contexts

43

Incomplete Cholesky and ILU Issues

- Choice of parameters

- good smooth transition from iterative to direct

methods - bad very ad hoc, problem-dependent

- tradeoff time per iteration (more fill gt more

time) vs of iterations (more

fill gt fewer iters) - Effectiveness

- condition number usually improves (only) by

constant factor (except MIC for some problems

from PDEs) - still, often good when tuned for a particular

class of problems - Parallelism

- Triangular solves are not very parallel

- Reordering for parallel triangular solve by graph

coloring

44

Sparse approximate inverses

- Compute B-1 ? A explicitly

- Minimize B-1A I F (in parallel, by

columns) - Variants factored form of B-1, more fill, . .

- Good very parallel

- Bad effectiveness varies widely

45

Multigrid (preview of next lecture)

- For a PDE on a fine mesh, precondition using a

solution on a coarser mesh - Use idea recursively on hierarchy of meshes

- Solves the model problem (Poissons eqn) in

linear time! - Often useful when hierarchy of meshes can be

built - Hard to parallelize coarse meshes well

- This is just the intuition lots of theory and

technology

46

The Landscape of Axb Solvers

More Robust

Less Storage (if sparse)

47

Complexity of linear solvers

Time to solve model problem (Poissons equation)

on regular mesh

48

EXTRA SLIDES

49

Column Preordering for Sparsity

Q

P

- PAQT LU Q preorders columns for sparsity, P

is row pivoting - Column permutation of A ? Symmetric permutation

of ATA (or G?(A)) - Symmetric ordering Approximate minimum degree

Amestoy, Davis, Duff - But, forming ATA is expensive (sometimes bigger

than LU). - Solution ColAMD ordering ATA with data

structures based on A

50

Column AMD Davis, G, Ng, Larimore

Matlab 6

row

col

A

I

row

AT

0

col

G(aug(A))

A

aug(A)

- Eliminate row nodes of aug(A) first

- Then eliminate col nodes by approximate min

degree - 4x speed and 1/3 better ordering than Matlab-5

min degree, 2x speed of AMD on ATA - Question Better orderings based on aug(A)?

51

Symmetric-pattern multifrontal factorization

52

Symmetric-pattern multifrontal factorization

- For each node of T from leaves to root

- Sum own row/col of A with childrens Update

matrices into Frontal matrix - Eliminate current variable from Frontal matrix,

to get Update matrix - Pass Update matrix to parent

53

Symmetric-pattern multifrontal factorization

- For each node of T from leaves to root

- Sum own row/col of A with childrens Update

matrices into Frontal matrix - Eliminate current variable from Frontal matrix,

to get Update matrix - Pass Update matrix to parent

54

Symmetric-pattern multifrontal factorization

- For each node of T from leaves to root

- Sum own row/col of A with childrens Update

matrices into Frontal matrix - Eliminate current variable from Frontal matrix,

to get Update matrix - Pass Update matrix to parent

55

Symmetric-pattern multifrontal factorization

T(A)

56

Symmetric-pattern multifrontal factorization

- Really uses supernodes, not nodes

- All arithmetic happens on dense square matrices.

- Needs extra memory for a stack of pending update

matrices - Potential parallelism

- between independent tree branches

- parallel dense ops on frontal matrix

57

MUMPS distributed-memory multifrontalAmestoy,

Duff, LExcellent, Koster, Tuma

- Symmetric-pattern multifrontal factorization

- Parallelism both from tree and by sharing dense

ops - Dynamic scheduling of dense op sharing

- Symmetric preordering

- For nonsymmetric matrices

- optional weighted matching for heavy diagonal

- expand nonzero pattern to be symmetric

- numerical pivoting only within supernodes if

possible (doesnt change pattern) - failed pivots are passed up the tree in the

update matrix

58

Question Preordering for static pivoting

- Less well understood than symmetric factorization

- Symmetric bottom-up, top-down, hybrids

- Nonsymmetric top-down just starting to replace

bottom-up - Symmetric best ordering is NP-complete, but

approximation theory is based on graph

partitioning (separators) - Nonsymmetric no approximation theory is known

partitioning is not the whole story

59

Remarks on (nonsymmetric) direct methods

- Combinatorial preliminaries are important

ordering, bipartite matching, symbolic

factorization, scheduling - not well understood in many ways

- also, mostly not done in parallel

- Multifrontal tends to be faster but use more

memory - Unsymmetric-pattern multifrontal

- Lots more complicated, not simple elimination

tree - Sequential and SMP versions in UMFpack and WSMP

(see web links) - Distributed-memory unsymmetric-pattern

multifrontal is a research topic - Not mentioned symmetric indefinite problems

- Direct-methods technology is also needed in

preconditioners for iterative methods